With AI as a development partner, things accelerated fast. I needed more hardware; a Pi wasn’t enough anymore. I built my first-ever PC: Ryzen 7 5700X, 64GB RAM, RTX 3060 12GB. Spec’d the parts with AI, assembled it myself. The container count started growing: Home Assistant, Frigate, MQTT, cameras, dashcam, local LLMs running on the GPU. Every week something new was running.

My use of AI was maturing too. I’d gone from using it like Google, to having it write code I’d paste into nano, to understanding context windows and prompt structure. Then I found Claude Code — AI in the terminal, seeing my files, taking plain English and making real changes. Another acceleration.

And then OpenClaw came out. I looked at it and thought — this just does what I’m already doing with Signal-CLI. What am I missing? But it bugged me. I couldn’t let it go. I hated the privacy aspect — data flowing through services without proper guardrails. AI agents were getting powerful, but who was watching them?

I started researching. AI security, trust boundaries, how to constrain what an agent can do. That research led me to a paper about CaMeL (Capabilities for Machine Learning). The idea that you can build an AI system where the agent can’t do anything you didn’t explicitly approve. Where the worker never sees raw user input. Where every action passes through security scanning before it executes.
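
To make those three properties concrete, here is a minimal Python sketch. It is not Sentinel's code and not the paper's implementation. Every name in it (`APPROVED_ACTIONS`, `BLOCKED_SUBSTRINGS`, `Step`, `plan`, `scan`, `worker`, `run`) is hypothetical, and the keyword-matching planner and substring scanner are toy stand-ins for a real model and a real scanner.

```python
from dataclasses import dataclass

# Hypothetical allowlist: the only actions the operator has explicitly approved.
APPROVED_ACTIONS = {"read_sensor", "send_message"}

# Hypothetical deny patterns standing in for a real security scanner.
BLOCKED_SUBSTRINGS = ("rm -rf", "curl ", "ignore previous instructions")


@dataclass(frozen=True)
class Step:
    """One structured action produced by the planner."""
    action: str
    argument: str


class PolicyError(Exception):
    """Raised when a step is not approved or fails scanning."""


def plan(user_request: str) -> list[Step]:
    # Toy planner: the only component that reads the raw user request.
    # A real system would use a model here; this just matches a keyword.
    if "temperature" in user_request.lower():
        return [
            Step("read_sensor", "living_room_temperature"),
            Step("send_message", "temperature report"),
        ]
    return []


def scan(step: Step) -> None:
    # Gate 1: the action must be on the explicit allowlist.
    if step.action not in APPROVED_ACTIONS:
        raise PolicyError(f"action not approved: {step.action}")
    # Gate 2: the argument must not contain a blocked pattern.
    lowered = step.argument.lower()
    for pattern in BLOCKED_SUBSTRINGS:
        if pattern in lowered:
            raise PolicyError(f"blocked pattern in argument: {pattern!r}")


def worker(step: Step) -> str:
    # The worker receives only a structured Step, never the raw request text.
    return f"{step.action}({step.argument})"


def run(user_request: str) -> list[str]:
    results = []
    for step in plan(user_request):
        scan(step)  # every action is scanned before it executes
        results.append(worker(step))
    return results
```

Calling `run("What's the temperature?")` walks both planned steps through `scan` before the worker touches them, while something like `scan(Step("delete_files", "/"))` raises `PolicyError` because that action was never approved. The point of the shape is that approval and scanning sit between planning and execution, so nothing reaches the worker by default.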

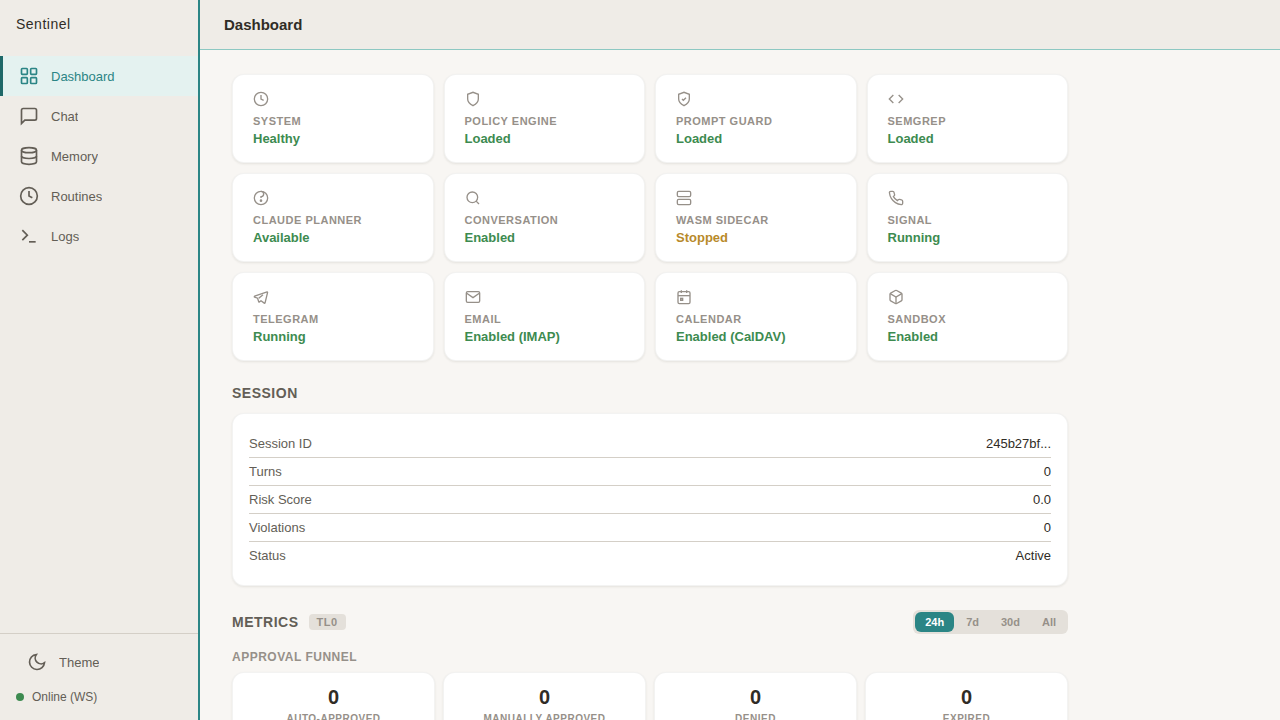

That was the spark. CaMeL became the architecture. The architecture became Sentinel.